An AI to kill us all, and in the darkness bind us

The increasing application of Artificial Intelligence in military operations is alarming — even more so when it comes to nuclear weapons

Remember WarGames? The classic 1983 film about the nuclear tension of the 80s warned us about the dangers of a fully automated, computerised system taking control of the American nuclear arsenal. A talented teenage hacker inadvertently entered the system and almost started a thermonuclear war, a scenario that was not at all far-fetched.

The following year saw the release of The Terminator, one of the greatest sci-fi films ever (what a time to go to the cinema, as I remember). A cyborg travels back in time to eliminate Sarah Connor, an ordinary woman who would turn out to be crucial to humankind’s future: she would give birth to the leader of the resistance against the machines that destroyed the world in a nuclear Armageddon. The artificial intelligence Skynet had become so smart that it decided to get rid of those pesky humans once and for all. Only a few survived, led by John Connor, Sarah’s son.

In the sequel, Terminator 2: Judgment Day (1991), Skynet is about to become operational, and a similar cyborg from the future (now one of the good guys) tries to stop it from happening. If it fails, the system will attack Russia, which will retaliate, and boom, we’re done.

The sequels, prequels, and reboots that followed ruined the franchise (the lesson is: never mess with timelines) — but the first two Terminators still stand as masterpieces of the genre.

Careful with that axe, Eugene

This all makes for great cinema but would make for a horrific reality. And even though there is a healthy dose of scepticism about the prospects for “self-aware” AI (whatever that means), the systems we have today, and those of the foreseeable future, can do an awful lot of damage.

An alarm was recently sounded by a statement signed by scientists, policymakers, AI experts, and many other public figures. Here it is in full:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Is this just fearmongering, at a moment when the world is already chock-full of life-threatening crises? It’s pretty vague, anyway. But the stated purpose is to “open up discussion”, and whatever we think of the true dangers, or of the signatories’ motivations, it’s high time this topic was discussed.

Back to the programming board

Like it or not, AI is already being heavily employed in military matters, not only in planning but also in locating the enemy, selecting targets, and carrying out (allegedly) precision strikes by missiles, artillery, and, above all, drones. One recent example is Project Maven, an American initiative partly supported by Google and Palantir, two of the high-tech companies in bed with the MICIMATT (the military-industrial-congressional-intelligence-media-academia-think-tank complex).

The war in Ukraine was admittedly used as a “laboratory” for the project, which relies on machine learning techniques to locate Russian troops and identify targets. Unfortunately for the Ukrainians, it failed to change the course of the war significantly; on the contrary, some blame it (among other factors) for the fiasco of last summer’s “counteroffensive”. Even the Ukrainian press acknowledges that the experiment had “mixed results”.

Acronyms on fire

Project Maven is part of an ambitious plan to automate some of the decision-making processes in the armed forces. When it comes to the detection and use of nuclear weapons, AI is an important element in upgrading the NC3 (nuclear command, control, and communications) infrastructure, especially early warning systems and the capacity for effective retaliation, if need be.

The Pentagon is developing the Joint All-Domain Command and Control (JADC2) project,

“a computer-driven system for collecting sensor data from myriad platforms, organizing that data into manageable accounts of enemy positions, and delivering those summaries at machine speed to all combat units engaged in an operation.”

Nuking for peace

Plans like these must obviously be undertaken with utmost care, and you don’t even need to resort to sci-fi films to understand the perils, one of which is

“the lack of real world data for use in training NC3 algorithms. Other than the two bombs dropped on Japan at the end of World War II, there has never been an actual nuclear war and therefore no genuine combat examples for use in devising reality-based attack responses. War games and simulations can be substituted for this purpose, but none of these can accurately predict how leaders will actually behave in a future nuclear showdown. Therefore, decision-support programs devised by these algorithms can never be fully trusted.”

One glaring example of this limitation, thankfully more at the level of dark humour than of imminent implementation, came from simulations in which AI models decided, almost immediately, to go at it and turn the whole world into a radioactive wasteland. Because, well, why not?

The “reasoning” provided by the software in some cases was that nuclear war would bring the desired “peace in the world”. Without humans around, there would indeed be peace. It’s a way to look at things — not very promising, though.

This scenario was eerily anticipated by Colossus: The Forbin Project (1970). The film tells the story of a supercomputer named Colossus that is put in charge of the US defence system. It soon discovers that there is a Soviet counterpart, and together they seize control of the world, holding humanity hostage with the nuclear arsenal.

Another troubling issue regarding the widespread introduction of AI in military affairs is what is known as “automation bias”, an apparently inescapable feature of the interaction between people and machines. It is a clear and present danger, rooted in human psychology.

“Merely having humans in the loop will not be enough to ensure effective human decision-making. Human operators frequently fall victim to automation bias, a condition in which humans overtrust automation and surrender their judgment to machines. Accidents with self-driving cars demonstrate the dangers of humans overtrusting automation, and military personnel are not immune to this phenomenon. To ensure humans remain cognitively engaged in their decision-making, militaries will need to take into account not only the automation itself but also human psychology and human-machine interfaces.”

These problems were already manifested during the illegal and murderous invasion of Iraq in 2003:

“In 2003, U.S. Army Patriot air and missile defense systems shot down two friendly aircraft during the opening phases of the Iraq war. Humans were in the loop for both incidents. Yet a complex mix of human and technical failures meant that human operators did not fully understand the complex, highly automated systems they were in charge of and were not effectively in control.”

The conclusion is that even if full automation remains the stuff of science fiction, the use of AI already involves great risks. This was apparently the motivation for the Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy, released by the American government and signed by 52 countries as of February this year (but not yet by the nuclear powers China, Russia, North Korea, Israel, India, and Pakistan). Even so, critics deemed the document too timid in addressing the subject.

AI-assisted massacre

A recent, and criminal, use of military AI made headlines with the disclosure of Lavender, a system employed by Israel to identify and target Palestinian combatants in Gaza (as well as people merely suspected of being combatants). According to an investigation by Israeli journalists, more than 30,000 people were flagged as possible targets. The calculus of acceptable “collateral damage” was shocking in its inhumanity. As reported by the independent magazine +972,

“in an unprecedented move, according to two of the sources, the army also decided during the first weeks of the war that, for every junior Hamas operative that Lavender marked, it was permissible to kill up to 15 or 20 civilians … The sources added that, in the event that the target was a senior Hamas official with the rank of battalion or brigade commander, the army on several occasions authorized the killing of more than 100 civilians in the assassination of a single commander.”

In the words of one of the sources quoted by the magazine, “once you go automatic, target generation goes crazy”.

Now imagine this, but with nuclear weapons.

Everybody’s at it

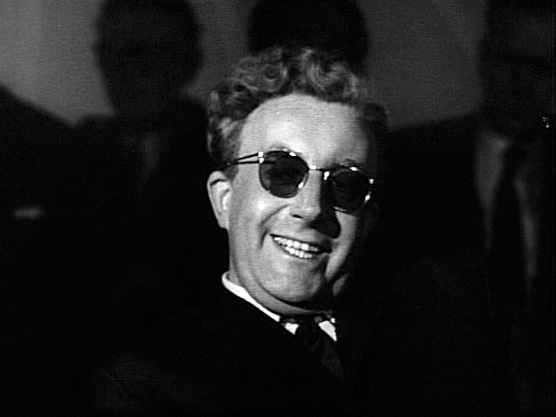

Americans and their allies are not the only ones investing in automated systems for military operations. Arguably, the pioneering effort was made by the Soviet Union, which developed Perimeter, also known as Dead Hand. Like the Doomsday Machine in Dr. Strangelove, it was designed to unleash the whole nuclear arsenal in the event of a “decapitating” attack on the Soviet leadership. The main difference from the film, however, is that Dead Hand was not fully automatic. Was, or is? It is not clear whether the system is still in use.

You can find more details here:

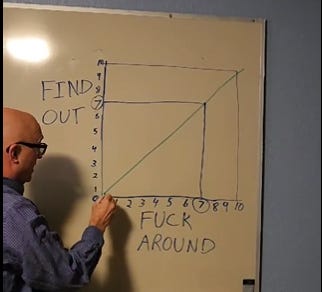

The rush to automation, even with humans “in the loop”, is evidently dangerous, and we certainly don’t know most of what is being planned or put into practice. People must demand full disclosure from their governments and from tech companies. Of course, this is not going to happen, but it is worth trying anyway, because the situation is highly threatening, especially since, as David Lightman said,

And he was right.